Good Systems

The Technology and Information Policy Institute participates in two Good Systems research projects: Ethical Data Design and Disinformation.

Ethics, Values, and A.I.

“Technology is neither good nor bad; nor is it neutral.” This is the first of the six laws of technology proposed by historian Melvin Kranzberg in 1985. It means that technology is only good or bad if we perceive it to be that way based on our own value system. At the same time, because the people who design technology value some things more than others, their values shape their designs.

We use that technology, and increasingly artificial intelligence, to entertain ourselves, communicate, get places faster, make predictions, swipe left or right, protect our homes, and solve complex problems quickly and easily. In short, A.I. is changing the way we do everything because it is everywhere, from dating apps to the most advanced military technology.

But because technology is never neutral, it can harm us in ways we might not intend or predict. The difficulty for us, as scientists and engineers, is that A.I. is also genuinely helpful.

It can do many things faster, better, and more easily than humans can, and humans reap the rewards. But how will A.I. affect society, work, and the way we interact with one another? We need to answer these questions proactively rather than waiting for bad things to happen and reacting after it is already too late.

In the words of Michael Crichton’s “Jurassic Park” mathematician, “Your scientists were so preoccupied with whether or not they could, they didn’t stop to think about if they should.”

CAN WE ENSURE THAT A.I. PROTECTS HUMANITY RATHER THAN DESTROYING IT?

That’s the question we have to ask now: Should we? How can we ensure that advances in A.I. are beneficial to humanity, not detrimental? How can we develop technology that makes life better for all of us, not just some? What unintended consequences are we overlooking or ignoring by developing technology that has the power to be manipulated and misused, from undermining elections to exacerbating racial inequality?

Our goal is to provide a way for prosocial values to drive the design of artificial intelligence in autonomous and semi-autonomous technologies so that those systems both protect and improve society.

Research Strategy

Introducing Bridging Barriers during his State of the University Address in 2016, President Fenves stated, “The toughest questions facing humanity and the world cross the boundaries of existing knowledge, and we must take a convergence approach to address them . . . Breakthroughs happen when we break down silos of knowledge. And we are doing that now.” Good Systems aims to embody this approach by bringing about a true convergence of disciplinary perspectives, one that is both symbiotic and synergistic. By connecting our disciplines in new networks, we aim to achieve a new intensity of collaboration across the University, in which each disciplinary perspective benefits from the insights of the others.

Phase I Goal

The goal of Good Systems in Phase I will be to conduct basic research on how to define, evaluate, and build good systems. Experts from a wide range of fields, including humanists, social scientists, and technologists, are needed to achieve convergent research. While other initiatives around the world are beginning to explore the ethical implications of AI, UT’s Good Systems is distinctive with respect to the wide range of fields engaged and the degree to which these fields converge via mutual respect and understanding.

What’s New

Huijeong Yeon’s Fulbright Journey at UT Austin

In an era where technology and policy are increasingly intertwined, Huijeong Yeon—a distinguished Fulbright scholar with eight years of experience in the South Korean government—is utilizing her time at the University of Texas at Austin to bridge the gap between...

The Business of Surveillance

In the summer of 2022, Good Systems generously supported three undergraduate students to work with the Being Watched team. We were pleased to have Helen Kang (Informatics ’25), Abha Misaqi (Economics ’25), and Zak Turner (Mechanical Engineering ’23) working on various...

Surveillance Ordinances in Five U.S. Cities

TIPI @ Good Systems 2022 Annual Symposium

In April 2022, Good Systems held its 2022 annual symposium. Members of the Being Watched project participated in several sessions, including a poster and mingling session held by the team on April 7, a core project presentation by Dr. Sharon Strover and Dr. Atlas...

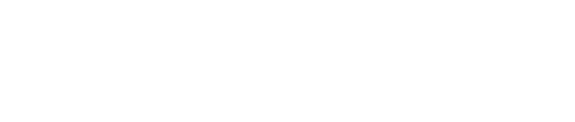

New Disinformation Research

Russian interference in the 2016 US presidential election has expanded discussions about the role of misinformation in politics as well as people’s information behavior. While many studies have been conducted to unravel the strategies and impacts of the Russian IRA’s...

Shiny Things Workshop

2020-02-21, 9:00 a.m. to 5:00 p.m.

There is so much buzz about artificial intelligence, but what’s real and what’s fiction? In this hands-on session about AI’s effects on science and society, Harvard researcher and artist Sarah Newman will help us explore...

Disinformation Group Meeting

12:00 p.m., FAC 402

As part of our Good Systems work, Talia Stroud, Director of the Center for Media Engagement; Mary Neuburger, Chair of the Department of Slavic and Eurasian Studies; and Sharon Strover proposed to host monthly “Disinformation Network” meetings to...

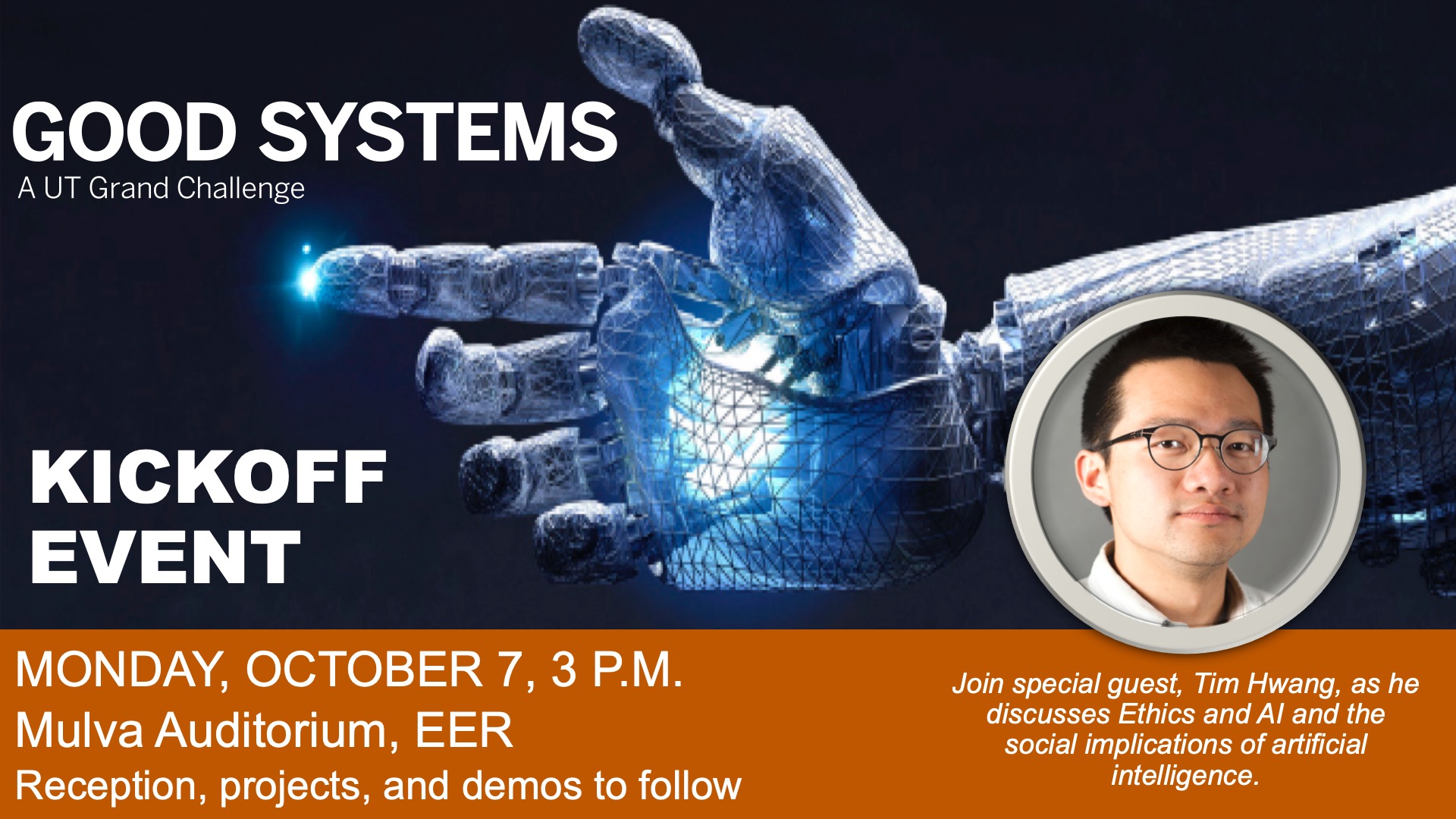

The Grand Challenge of Ethics and AI: A Fireside Chat with Tim Hwang

2019-10-07, 3 p.m.

Good Systems is hosting a fireside chat with Tim Hwang, director of the Harvard-MIT Ethics and Governance of AI Initiative, and Professor Sharon Strover from the Moody College of Communication. Dubbed “The Busiest Man on...

AI Is Tricky: An Interview with Tim Hwang

When people hear the term artificial intelligence or AI, there may be a tendency to think of the latest “Terminator” movie or fall for the hype of menacing machines controlling our existence and destroying humanity. Tim Hwang, a lawyer, writer, and researcher working...

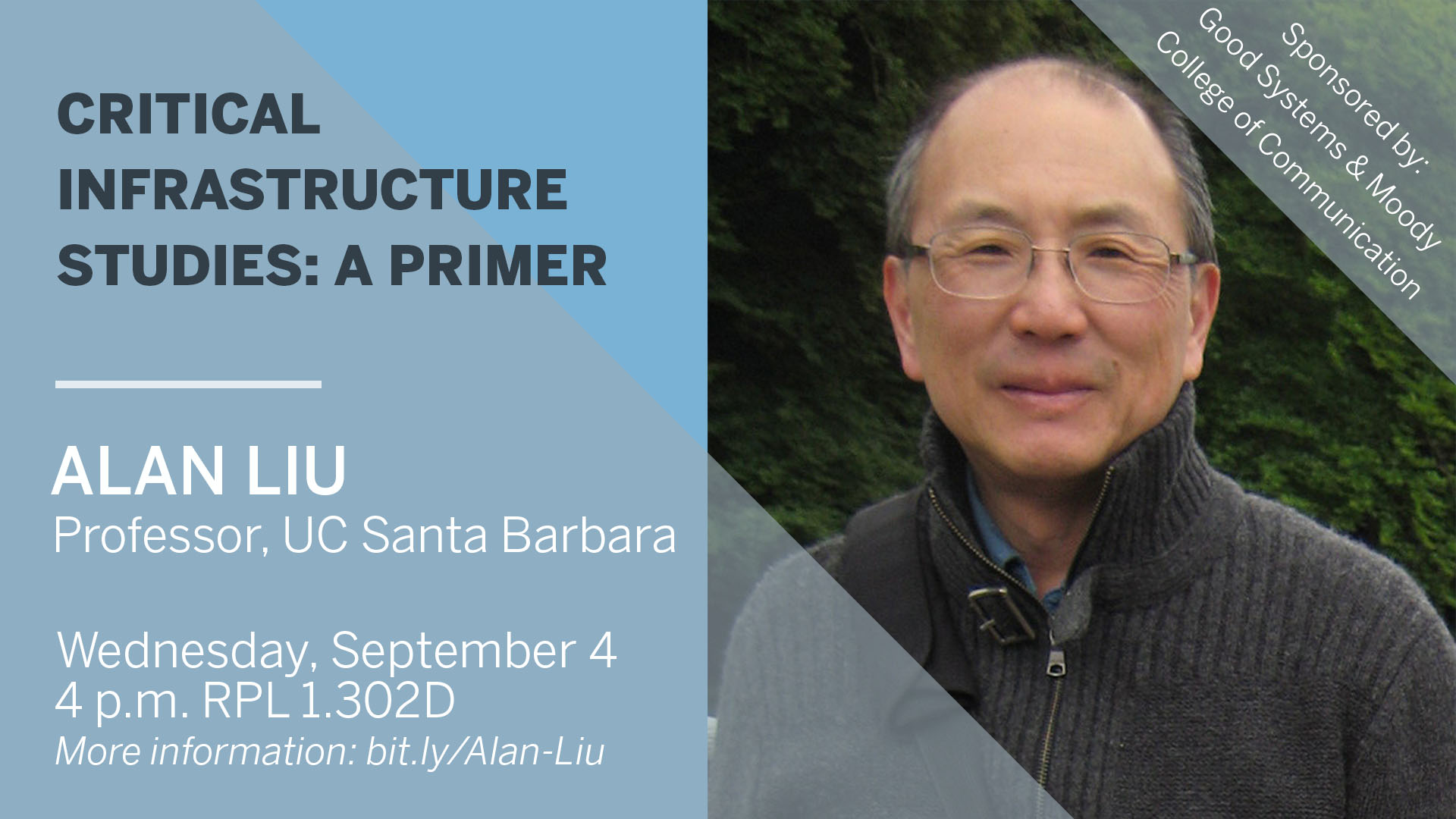

Critical Infrastructure Studies: A Primer

2019-09-04, 4 to 6 p.m., Patton Hall (RLP), 1.302D, 305 E 23rd St, Austin, Texas 78712

Abstract: What have been the main approaches to the study of infrastructure that now combine to make the topic of such compelling socio-political, technological,...